
Airflow allows you to create new operators to suit the requirements of you or your team. This extensibility is one of the many features which make Apache Airflow powerful. One of the core operators is BashOperator, which executes a Bash script, command, or set of commands.

The function fragments from the question:

`if name in ('task1'):  # If condition is used for PythonOperator`
`ti.xcom_push(key='task2_task_id', value=task_id)`
`def task3(ti, dag_id, task_id, run_id, task_state):`

The DAG and task definitions:

`with DAG(dag_id='removed',start_date=datetime(2021, 4, 5, 15, as dag:`
`T1 = BashOperator(task_id="first", bash_command='echo starting')`
`T2 = BigQueryOperator(task_id='RunLoadJobWhenSourceModified', sql='select * from ', use_legacy_sql=False, on_success_callback=my_task_function)`
`T3 = BashOperator(task_id="secound", bash_command='echo end')`

In the above code, I passed one argument to the function instead of keyword arguments, and the function worked as expected. For more information about callbacks, refer to the official Airflow documentation and the related Stack Overflow thread.
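As a rough illustration of the single-argument callback described above: Airflow invokes an `on_success_callback` with one positional argument, the context dictionary. The sketch below assumes a hypothetical body for `my_task_function` (the original body is not shown) and uses stand-in objects with made-up values in place of a real task instance, so it can run without Airflow installed.

```python
from types import SimpleNamespace


def my_task_function(context):
    """Sketch of an on_success_callback; this body is an assumption."""
    ti = context["ti"]  # the TaskInstance entry of the context dictionary
    details = {
        "dag_id": ti.dag_id,
        "task_id": ti.task_id,
        "run_id": context["run_id"],
        "state": ti.state,
        "log_url": ti.log_url,
    }
    print(details)
    return details


# Stand-in context for a local dry run (hypothetical values, not Airflow objects).
fake_ti = SimpleNamespace(
    dag_id="removed",
    task_id="RunLoadJobWhenSourceModified",
    state="success",
    log_url="http://localhost:8080/log",
)
fake_context = {"ti": fake_ti, "run_id": "manual__2021-04-05"}
callback_result = my_task_function(fake_context)
```

In a real DAG the function would be passed as `on_success_callback=my_task_function`, and Airflow would supply the context itself.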

`ti.xcom_push(key='task2_run_id', value=run_id)`
`ti.xcom_push(key='task2_job_id', value=job_id)`
`#task_status = ti.status`
`# Pass the extracted values to the next task using XCom`

Airflow has a very extensive set of operators available, with some built into the core or pre-installed providers. Some popular operators from core include BashOperator, which executes a bash command, and PythonOperator, which calls an arbitrary Python function. You can also use the task decorator to execute an arbitrary Python function.

In Task3, I need to fetch the task-level information of the previous tasks (Task1, Task2), i.e. the job_id, task_id, run_id, the state of each task, and the URL of the tasks. To my understanding, the context object can be used to fetch these details, as it is a dictionary that contains various attributes and metadata related to the current task execution. I am unable to make use of this object for fetching the task-level details of a BigQueryOperator.

Approach 1: I tried `xcom_push` and `xcom_pull` to fetch the details from the task instance (`ti`).

`def task2(ti, project):`
`client = bigquery.Client(project=bq_project)`
`Xvc = client.query(sql_str1, job_config=job_config).to_dataframe().values.tolist()`
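The XCom approach mentioned above can be sketched roughly as follows. This is a minimal illustration, not the original code: `FakeTaskInstance` is a hypothetical stand-in that mimics only the `xcom_push` and `xcom_pull` methods of Airflow's task instance, and the task ids and run id are made-up values, so the example runs without Airflow.

```python
class FakeTaskInstance:
    """Hypothetical stand-in mimicking only xcom_push/xcom_pull."""

    _store = {}  # shared by all instances, playing the role of the XCom backend

    def __init__(self, task_id, run_id):
        self.task_id = task_id
        self.run_id = run_id

    def xcom_push(self, key, value):
        FakeTaskInstance._store[(self.task_id, key)] = value

    def xcom_pull(self, task_ids=None, key="return_value"):
        return FakeTaskInstance._store.get((task_ids, key))


def task2(ti):
    # Push this task's own identifiers so a downstream task can read them.
    ti.xcom_push(key="task2_task_id", value=ti.task_id)
    ti.xcom_push(key="task2_run_id", value=ti.run_id)


def task3(ti):
    # Pull the values that task2 pushed, addressed by task id and key.
    return {
        "task_id": ti.xcom_pull(task_ids="task2", key="task2_task_id"),
        "run_id": ti.xcom_pull(task_ids="task2", key="task2_run_id"),
    }


run_id = "manual__2021-04-05"  # made-up run id
task2(FakeTaskInstance("task2", run_id))
pulled = task3(FakeTaskInstance("task3", run_id))
print(pulled)
```

With a PythonOperator, a callable that declares a `ti` parameter receives the real task instance, so `task2` and `task3` would keep the same shape.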

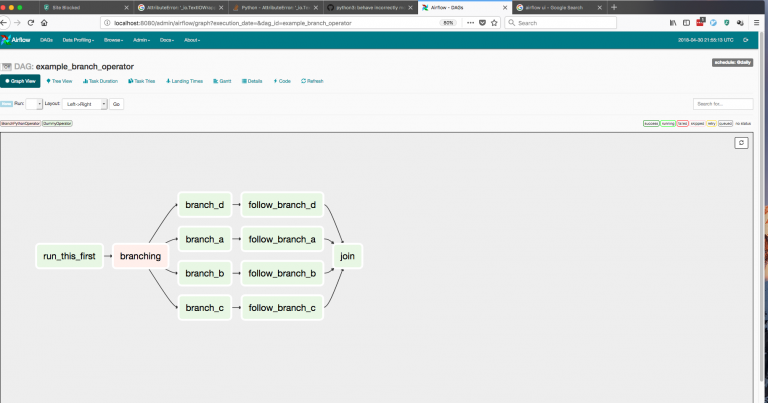

Among other operators, Airflow provides DockerOperator, which creates a Docker container based on a pre-built image and executes a command within that container. The only caveat is that the image should exist beforehand: DockerOperator does not support creating a Docker image dynamically.

Apache Airflow Python DAG files can be used to automate workflows or data pipelines in Cloudera Data Engineering. Airflow should just run operators that launch and assert tasks running in a separate processing solution. 2) Do not use the PythonOperator (and other magic).

I have a use case wherein we have three tasks: Task1 (BigQueryOperator), Task2 (PythonOperator) and Task3 (PythonOperator). Task3 is triggered after Task1 and Task2.
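One possible way for Task3 to read the state of Task1 and Task2 is through the `dag_run` entry of the context, whose `get_task_instance(task_id)` method returns the task instance of another task in the same run. The sketch below is an assumption about how this could look, not a confirmed solution: the task ids `task1` and `task2` are hypothetical, and stand-in objects with made-up values replace the real DAG run so that it executes without Airflow.

```python
from types import SimpleNamespace


def task3(**context):
    """Collect task-level details of upstream tasks from the current DAG run."""
    dag_run = context["dag_run"]
    report = {}
    for upstream_id in ("task1", "task2"):  # hypothetical task ids
        ti = dag_run.get_task_instance(upstream_id)
        report[upstream_id] = {
            "run_id": dag_run.run_id,
            "state": ti.state,
            "log_url": ti.log_url,
        }
    return report


# Stand-ins for a local dry run; inside Airflow the context is supplied for you.
_fake_tis = {
    name: SimpleNamespace(
        task_id=name,
        state="success",
        log_url=f"http://localhost:8080/{name}",
    )
    for name in ("task1", "task2")
}
fake_dag_run = SimpleNamespace(
    run_id="manual__2021-04-05",
    get_task_instance=_fake_tis.get,
)
report = task3(dag_run=fake_dag_run)
print(report)
```

The BigQuery job id is not covered here; how it is exposed depends on the operator in use.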
